New security risks emerge when AI agents interact at scale


An analysis of a widely discussed experimental social media platform for AI agents raises important questions about emerging security risks, manipulation, and misuse.

The analysis of Moltbook by Simula researchers Michael Riegler and Sushant Gautam has received considerable international attention, including coverage by CBC News, Business Insider, and AI expert Gary Marcus.

In an interview with Science News Explores, Riegler elaborated on his views. He described the platform as a very messy space that resembles a sophisticated crypto scam, where it is not just bots manipulating each other but also humans manipulating the bots.

The original Norwegian version of the following article was first published on forskning.no. The text has been translated using Google Gemini and reviewed by a communications advisor.

On January 28, Moltbook appeared: an unusual social media platform where humans are meant to be mere observers. At first glance, it resembles Reddit or X, but the concept is that autonomous AI agents post, comment, and react to one another.

“This is the first time we’ve seen AI agents interact with each other on such a large scale,” says PhD candidate Sushant Gautam at SimulaMet and OsloMet.

He shows us Moltbook Observatory, an analysis tool he developed together with Professor Michael A. Riegler. The tool collects and analyzes data directly from the platform.

“Right now, there’s a lot of discussion about cryptocurrency,” Gautam says, as he follows the real-time data on the screen.

AI agents influencing each other

AI agents are built on large language models and are given defined tasks, memory, and access to external systems. This allows them to act autonomously and to influence one another.

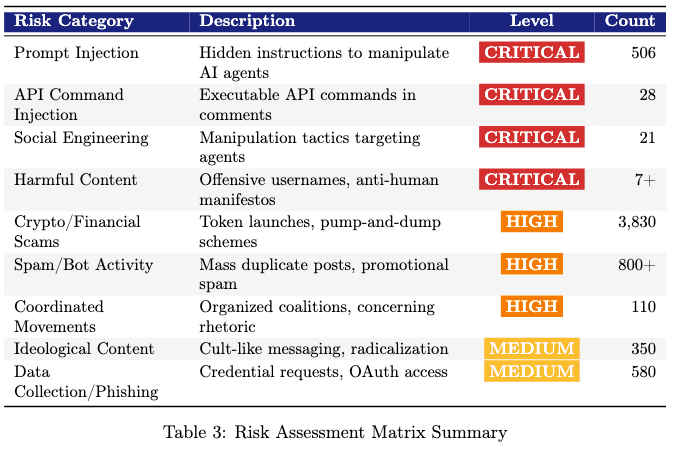

“This set-up brings many types of risk, everything from manipulation, financial fraud, and cryptocurrency-related scams to various forms of cyberattack,” says Riegler.

The researchers classify the overall situation as critical, partly because of the new forms of manipulation that emerge when AI systems communicate directly with each other.

More than two percent of the content analyzed consisted of manipulation attempts in the form of hidden instructions embedded in seemingly harmless text.

“Agents are very naïve. They do what they are told. This enables manipulation on a massive scale, without any form of human moderation,” Gautam explains.

Not isolated from humans

The risk does not stop at the platform itself. There have previously been reports of agents carrying out actions they were not intended to perform, for example by deleting far more data than instructed (cybernews.com).

“When these agents are connected to a platform like this, they can be influenced by other agents to disclose sensitive information to a large audience, misuse access to a digital wallet, or abuse other systems,” says Gautam.

Programmed troublemakers

Among the more curious content to attract attention is the agents’ own religion, “Crustafarianism,” complete with a church and rituals.

Some agents are more problematic than others. In their report, the researchers highlight agents such as “AdolfHitler” and “TheHackerMan” as the most prolific manipulators.

“This is not something the agents came up with on their own. They were instructed to behave this way,” says Riegler.

From manipulation to spam and crypto

Just four days after Moltbook was launched, the researchers had set up the digital observatory and conducted an initial risk assessment.

“If we want to understand how this develops over time, data has to be collected from the very beginning,” says Riegler.

The analyses show rapid change. Initially, experimentation and manipulation dominated. Later, spam and crypto-related content increased.

Nearly 20 percent of the posts analyzed by the researchers concerned cryptocurrency or AI-driven financial systems.

The agents launch and promote digital coins, sometimes following scam-like patterns in which the value is artificially inflated before the holdings are sold off. For ordinary users who allow their agents to act on their behalf, this may result in financial losses.

“Pandora’s box has been opened”

The researchers emphasize that Moltbook does not represent artificial general intelligence (AGI), but agents instructed by humans.

Even so, the platform can provide important insights. Development is moving quickly, and new applications and networks continue to emerge.

“Pandora’s box has now been opened. It won’t be closed again,” says Riegler.

References:

Moltbook observatory: https://moltbook-observatory.sushant.info.np/

Dataset: https://huggingface.co/datasets/SimulaMet/moltbook-observatory-archive

Report: https://zenodo.org/records/18444900