Langtangen seminar

Named in honour of Professor Hans Petter Langtangen, the Langtangen Seminar on Scientific Computing series is part of Simula's continued efforts to advance scientific computing. The series features internationally renowned researchers across computational mathematics, numerical analysis, optimization, and high-performance computing. The 2026 seminar series is dedicated to a group of researchers whom we consider to be driving forces of the next generation of computational mathematics and scientific computing.

About the events

Time: Tuesdays in April and May 2026 at 14:00 CEST, starting April 7, 2026.

Format: A 40-minute online colloquium followed by up to 15 minutes of Q&A and discussion.

Platform: The talks will be streamed via Zoom and, pending consent, subsequently hosted on the series YouTube channel.

Join the Zoom webinar: https://simula.zoom.us/j/66860100673

Speakers

April 21: Matthew Colbrook, University of Cambridge, UK

April 28: Yunan Yang, Cornell University, Ithaca, NY, USA

May 5: (No seminar)

May 12: Erin Carson, Charles University, Prague, Czech Republic

April 28 - Yunan Yang

Speaker: Yunan Yang, Goenka Family Assistant Professor, Department of Mathematics, Cornell University, USA

Title: When Optimal Transportation Meets PDE-Based Inverse Problems

Abstract: Optimal transport (OT), originating with Monge (1781) and advanced by Kantorovich (1942), now plays a central role in analysis and applications through its ties to geometry and kinetic theory. We use the quadratic Wasserstein distance from OT in inverse problems and high-dimensional kinetic PDE-constrained optimization, including waveform inversion and dynamical system modeling. Compared with least squares, this approach reduces nonconvexity and noise sensitivity and provides a natural geometric framework for gradients. Its benefits are twofold: a robust, non-Euclidean data misfit for diverse matching tasks, and Wasserstein-gradient dynamics that improve analysis and computation, leading to better results in practical inverse problem solving.
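As a rough illustration of the convexity claim (a sketch that is not taken from the talk), consider a pulse and a time-shifted copy of it. In one dimension, the squared quadratic Wasserstein distance between two equal-size samples is the mean squared difference of the sorted samples, so it grows like the square of the shift, whereas the least-squares misfit flattens out once the pulses no longer overlap. The function names, the Gaussian pulse, and all parameter values below are assumptions chosen for the example.

```python
import math
import random


def w2_squared(xs, ys):
    """Squared quadratic Wasserstein distance between the empirical
    distributions of two equal-size 1D samples (sorted-sample formula)."""
    if len(xs) != len(ys):
        raise ValueError("samples must have the same size")
    return sum((a - b) ** 2 for a, b in zip(sorted(xs), sorted(ys))) / len(xs)


def gaussian(t, center, width):
    """Unnormalized Gaussian pulse used as a stand-in signal (assumption)."""
    return math.exp(-0.5 * ((t - center) / width) ** 2)


def l2_squared(shift, width=0.5, dt=0.01, half_span=20.0):
    """Least-squares misfit between the pulse and its shifted copy,
    approximated by a Riemann sum on a uniform grid."""
    n = int(2 * half_span / dt) + 1
    total = 0.0
    for i in range(n):
        t = -half_span + i * dt
        total += (gaussian(t, 0.0, width) - gaussian(t, shift, width)) ** 2
    return total * dt


def shifted_w2_squared(shift, width=0.5, n=2000, seed=0):
    """W2^2 between a sample drawn from the Gaussian pulse shape and the
    same sample translated by `shift`."""
    rng = random.Random(seed)
    xs = [rng.gauss(0.0, width) for _ in range(n)]
    ys = [x + shift for x in xs]
    return w2_squared(xs, ys)


if __name__ == "__main__":
    for s in (1.0, 2.0, 4.0, 8.0):
        print(f"shift={s}: W2^2={shifted_w2_squared(s):.4f}, L2^2={l2_squared(s):.6f}")
```

In this toy setting the Wasserstein misfit keeps growing with the shift, while the least-squares misfit is essentially constant for large shifts and therefore gives a gradient-based method nothing to follow.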

May 5 - TBA

May 12 - Erin Carson

Speaker: Erin Carson, Associate Professor at the Faculty of Mathematics and Physics, Charles University

Title: Mixed-precision Computing: High Accuracy With Low Precision

Abstract: Mixed-precision algorithms have launched an era in which efficiency and accuracy are no longer mutually exclusive. Rather than rely entirely on high-precision formats like double (64-bit) precision, mixed-precision algorithms apply lower precisions such as single (32-bit) or half (16-bit) precision whenever possible, reserving higher precision only for critical steps. Doing so can drastically reduce memory requirements, improve performance, and lessen energy consumption on modern computer hardware without sacrificing accuracy or stability. In this talk, we discuss the challenges of using low/mixed precision, and present five cases, common in scientific applications, where using low/mixed precision makes sense.
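One classical instance of this idea is iterative refinement for a linear system: solve in low precision, compute the residual in high precision, and solve for a correction in low precision again. The following is a minimal sketch under stated assumptions and is not taken from the talk. It emulates single precision in pure Python by rounding through `struct`, the helper names (`f32`, `solve_low`, `refine`) are hypothetical, and it repeats the elimination at every step where a practical implementation would reuse the low-precision factorization.

```python
import struct


def f32(x):
    """Round a Python float (binary64) to the nearest binary32 value."""
    return struct.unpack("f", struct.pack("f", x))[0]


def solve_low(A, b):
    """Gaussian elimination with partial pivoting in which every stored
    value and arithmetic result is rounded to binary32."""
    n = len(b)
    M = [[f32(v) for v in row] + [f32(bi)] for row, bi in zip(A, b)]
    for k in range(n):
        p = max(range(k, n), key=lambda i: abs(M[i][k]))
        M[k], M[p] = M[p], M[k]
        for i in range(k + 1, n):
            m = f32(M[i][k] / M[k][k])
            for j in range(k, n + 1):
                M[i][j] = f32(M[i][j] - f32(m * M[k][j]))
    x = [0.0] * n
    for i in reversed(range(n)):
        s = M[i][n]
        for j in range(i + 1, n):
            s = f32(s - f32(M[i][j] * x[j]))
        x[i] = f32(s / M[i][i])
    return x


def refine(A, b, steps=5):
    """Iterative refinement: low-precision solves, binary64 residuals."""
    x = solve_low(A, b)
    for _ in range(steps):
        # Residual computed in binary64 (the "critical step").
        r = [bi - sum(a * xj for a, xj in zip(row, x)) for row, bi in zip(A, b)]
        d = solve_low(A, r)  # correction computed in low precision
        x = [xi + di for xi, di in zip(x, d)]
    return x


if __name__ == "__main__":
    A = [[4.0, 1.0, 0.0], [1.0, 3.0, 1.0], [0.0, 1.0, 2.0]]
    x_true = [0.1, 0.2, 0.3]
    b = [sum(a * x for a, x in zip(row, x_true)) for row in A]
    print("low precision only:", solve_low(A, b))
    print("after refinement:  ", refine(A, b))
```

For a well-conditioned matrix such as the one in the demo, the refined solution is accurate to roughly double precision even though every solve is carried out in emulated single precision.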

More information

This seminar is organised by Marie E. Rognes & Thomas M. Surowiec.

Join the Zoom webinar: https://simula.zoom.us/j/66860100673

Past seminars in this series

Data-Driven Koopman Methods: Spectra, Forecasting, and Open Challenges

Matthew Colbrook, Associate Professor in the Department of Applied Mathematics and Theoretical Physics at the University of Cambridge.

Abstract: Many problems in science and engineering require extracting dynamical structure from data. Koopman operators provide a powerful framework by representing nonlinear dynamics through a linear operator on observables, recasting forecasting as a spectral approximation problem. A central question is therefore: when can spectral properties be reliably learned from finite data, and how do they control prediction accuracy?

In this talk, I will present recent advances in the numerical analysis of data-driven Koopman methods, focusing on spectral approximation and forecast error bounds. I will describe a framework that connects operator theory with practical algorithms, yielding guarantees for approximating Koopman spectra and clarifying issues such as spectral pollution and continuous spectra. At the same time, adversarial dynamical systems reveal intrinsic limits on the spectral information recoverable from finite data. I will then show how these approximations translate into quantitative bounds on forecasting error, capturing how approximation and statistical effects propagate over time and determine the limits of long-term prediction.

Applications range from identifying coherent structures in turbulent flows and molecular dynamics to extracting long-timescale behaviour in climate and geophysical systems. In the final part of the talk, I will outline key open challenges at the interface of these areas and scientific machine learning.

Natural Gradient Flows on Neural Network Manifolds

Olga Mula Hernandez, Professor of Mathematics and Chair of Computational Partial Differential Equations at the University of Vienna.

Abstract: This talk addresses numerical methods for gradient flows in Hilbert spaces based on neural network approximations. The central idea is to represent the solution on a neural network manifold and evolve its parameters in time. At first glance, this approach appears general, elegant, and easy to implement, and it has achieved notable empirical success in machine learning and scientific computing for PDEs. A closer look, however, reveals significant challenges. Developing a proper functional framework that ensures existence of solutions and rigorously connects to practical algorithms raises subtle issues. In this talk, I will present a framework to address these challenges, and show why they are not merely technical obstacles, but rather reflect fundamental aspects of neural approximation.

Learning across shapes: non-intrusive mesh-free surrogate models via shape-informed operator learning

Francesco Regazzoni, Politecnico di Milano, Milano, Italy

Abstract: The numerical approximation of mathematical models based on partial differential equations can be computationally prohibitive, particularly for many-query tasks such as sensitivity analysis, robust parameter estimation, and uncertainty quantification. To address these challenges, we introduce a Scientific Machine Learning framework that integrates physical knowledge with data-driven techniques, enabling accelerated evaluation and emulation of differential models. Central to our approach is the Universal Solution Manifold Network, an operator learning method based on artificial neural networks that predicts spatial outputs while accounting for variations in physical and geometrical parameters. Our method employs a mesh-less architecture, overcoming the limitations of traditional discretization methods, such as image segmentation and mesh generation. Geometrical variability is encoded through a technique based on the signed distance function, which allows for the automatic extraction of a low-dimensional shape code even in cases of variable topology. Our approach also accommodates a universal coordinate system that maps points across different geometries, enhancing the model's ability to generalize and make accurate predictions for unobserved geometries and physical parameters. The framework is non-intrusive and modular, enabling flexibility in incorporating physical constraints through customizable loss functions. We demonstrate the effectiveness of our approach with test cases in fluid dynamics and solid mechanics. The results highlight that our method provides accurate and inexpensive approximations of the solution of differential models, eliminating the need for costly re-training, segmentation, or remeshing for new instances.

Computational models of cardiac electrophysiology — from millimeters to micrometers to nanometers

Aslak Tveito, Simula Research Laboratory

Abstract:

Computational models of cardiac electrophysiology have seen remarkable progress over the past decades. In the 1980s, whole-heart simulations were considered impossible—the estimated solution time was around 3,000 years. That estimate turned out to be overly pessimistic: by 2011, the problem was solved in five minutes. However, the Bidomain model used in these computations is, by construction, a coarse description of the system. Cardiomyocytes are not explicitly represented; in typical simulations, one computational mesh block covers close to a thousand cells. This highlights a fundamental lack of resolution. At the millimeter scale, the Bidomain model is adequate. But the cell itself operates on the micrometer scale, where a different modeling approach is required.

More recent models resolve individual cardiomyocytes, operating at the micrometer level. This comes at the price of a significantly harder mathematical problem, but with the advantage of enabling detailed representations of single cells. The action potential in each cell is driven by ion channels located at the cell membrane.

Understanding the dynamics near individual ion channels requires yet finer resolution—down to the nanometer scale. At this level, the appropriate mathematical description is the Poisson-Nernst-Planck system. Solving these equations is challenging, but they provide access to the Debye layer and rely almost entirely on physical constants, offering a model closer to basic physics than the approaches mentioned above.

In this talk, I will give a gentle walkthrough of these models, discuss the computational difficulties they pose, and outline some of the physiological questions that can be studied (and in some cases have been studied) using them.

Learning PDEs

Siddhartha Mishra, Eidgenössische Technische Hochschule Zürich

Abstract:

Despite their remarkable success over many decades, numerical methods for approximating PDEs can incur a very high computational cost. This limitation has provided the impetus for the design of fast and accurate Machine Learning/AI based neural PDE surrogates which can learn the PDE solution operator from data.

In this talk, we review some of the latest developments in the field of Neural Operators, which are widely used as an ML paradigm for PDEs and discuss state of the art neural operators based on convolutions or attention. We will discuss graph and transformer based architectures for PDEs on arbitrary domains and conditional Diffusion models for PDEs with chaotic multiscale solutions. Finally, the issue of sample complexity is addressed by the design of general purpose Foundation models for PDEs.

Mathematical imaging and structure-preserving deep learning

Carola Schönlieb, University of Cambridge

Abstract:

Images are a rich source of beautiful mathematical formalism and analysis.

Associated mathematical problems arise in functional and non-smooth analysis, the theory and numerical analysis of nonlinear partial differential equations, inverse problems, harmonic, stochastic, and statistical analysis, and optimization, just to name a few. Applications of mathematical imaging are profound and arise in biomedicine, material sciences, astronomy, digital humanities, as well as many technological developments such as autonomous driving, facial screening and many more.

In this talk I will discuss my perspective on mathematical imaging and share my fascination with, and vision for, the subject. I will then zoom in on structure-preserving deep learning and its relevance for solving inverse imaging problems.

Enforcing conservation laws and dissipation inequalities via auxiliary variables

Patrick E. Farrell, University of Oxford

Abstract:

We propose a general strategy for enforcing multiple conservation laws and dissipation inequalities in the numerical solution of initial value problems. The key idea is to represent the conservation law or dissipation inequality by means of its associated test function; we introduce auxiliary variables representing the projection onto a discrete test set, and modify the equation to use these new variables. We demonstrate the ideas by generalizing to arbitrary order the energy-dissipating and helicity-tracking scheme of Rebholz for the incompressible Navier–Stokes equations, and by devising a novel time discretization of the compressible Navier–Stokes equations that for the first time conserves mass, momentum, and energy, while provably dissipating entropy.

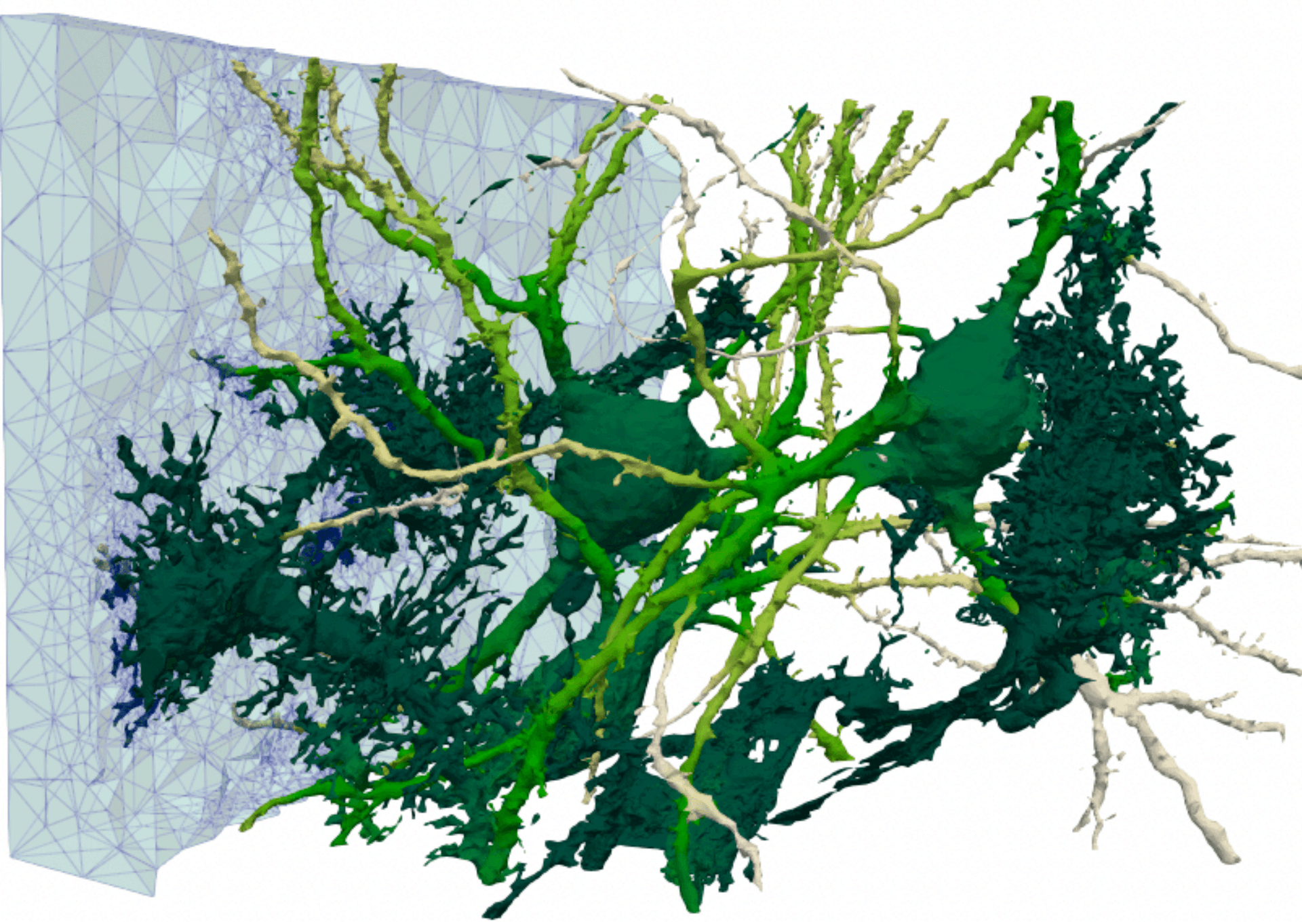

Modeling neurodegenerative disease

Paola F. Antonietti, Politecnico di Milano

Abstract:

Neurodegenerative diseases (NDs) are complex disorders that mainly affect neurons in the brain and nervous system, resulting in progressive functional decline and structural deterioration. A common pathological hallmark among various NDs is the accumulation of disease-specific misfolded and aggregated proteins, such as amyloid-beta and tau in Alzheimer's disease and α-synuclein in Parkinson's disease. This talk centers on the mathematical and numerical modeling of the fundamental mechanisms that drive and sustain neurodegeneration.

First, we discuss modeling the dynamics of misfolded proteins using increasingly complex mathematical models. We develop and analyze high-order discontinuous Galerkin methods on polyhedral grids (PolyDG) to simulate these processes accurately. In the second part of the talk, we present mathematical and computational models of waste clearance mechanisms in the brain, which are critical to the onset and progression of NDs. Specifically, we investigate glymphatic and cerebrospinal fluid dynamics and their roles in neurodegenerative processes. Furthermore, we discuss modeling seizure dynamics, focusing on their interplay with pathological protein accumulation. We present numerical simulations based on patient-specific brain geometries reconstructed from clinical imaging data, providing insights into the complex interactions involved in neurodegeneration.

Control and Machine Learning

Enrique Zuazua, Friedrich-Alexander-Universität Erlangen-Nürnberg

Abstract:

Systems control, or cybernetics—a term first coined by Ampère and later popularized by Norbert Wiener—refers to the science of control and communication in animals and machines. The pursuit of this field dates back to antiquity, driven by the desire to create machines that autonomously perform human tasks, thereby enhancing freedom and efficiency.

The objectives of control systems closely parallel those of modern Artificial Intelligence (AI), illustrating both the profound unity within Mathematics and its extraordinary capacity to describe natural phenomena and drive technological innovation.

In this lecture, we will explore the connections between these mathematical disciplines and their broader implications. We will also discuss our recent work addressing two fundamental questions: Why does Machine Learning perform so effectively? And how can data-driven insights be integrated into the classical applied mathematics framework, particularly in the context of Partial Differential Equations (PDE) and numerical methods? This effort is leading us to a new emerging field of PDE+D(ata) in parallel to the development of new Digital Twins technologies.